Think you can just drop your GPU servers into Ashburn or Dallas and call it a day?

In 2026, GPU colocation in the US is a power-first strategy game – where location decisions directly impact cost, deployment timelines, and whether providers will even accept your footprint.

How can you find GPU colocation in the US in 2026?

Demand for GPU colocation is growing fast, but the constraint is power and cooling, not real estate. AI training and inference clusters routinely push 10–40kW per rack, and many legacy facilities simply cannot support that density.

At the same time, most large operators prefer big footprints and MW‑scale deals, which leaves small GPU clusters and startup inference workloads hunting for mid‑tier or regional providers that actually have 5–50kW per rack available. That is exactly where a broker like QuoteColo earns its keep: mapping “where power exists today” to your specific GPU design instead of you chasing marketing pages and waitlists.

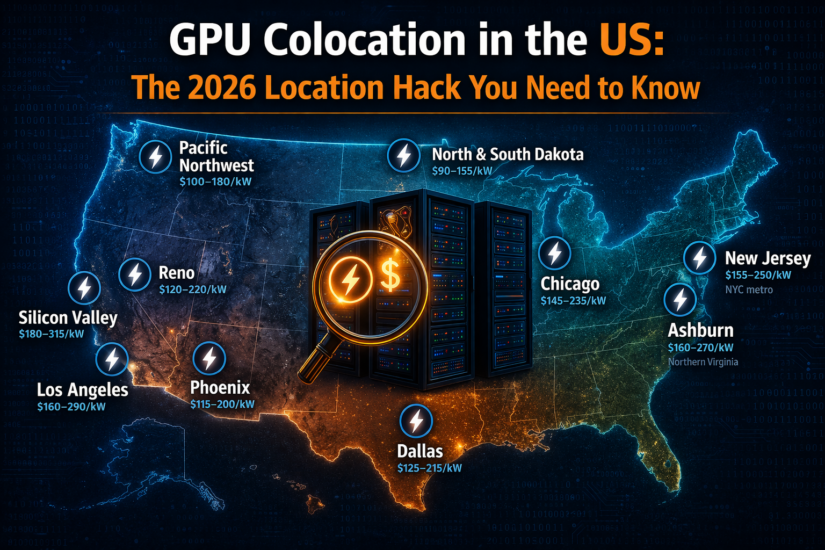

Where are the most popular GPU colocation locations?

Dallas, TX: First Choice for Many GPU Clusters

Dallas has become a default answer for first‑time GPU colocation because it offers a strong mix of available power, reasonable rates, and good national latency. Typical GPU colocation pricing sits in the 125–215 USD per kW band with 10–40kW racks common, and many providers there are comfortable onboarding 5–50kW GPU clusters without MW‑scale commitments.

If you are moving an inference cluster out of the cloud or standing up your first 1–4 GPU racks, Dallas is often where a broker will look first, simply because you can usually get power within weeks rather than landing on a 6–12‑month waitlist.

Northern Virginia / Ashburn, VA: Latency and Interconnect Capital

Ashburn is still the interconnect king for East Coast and global traffic, with dense peering, cloud on‑ramps, and hyperscaler presence. GPU racks here commonly run 10–30kW, with typical pricing in the 160–270 USD per kW range and very tight power availability in many halls.

This is where you colocate GPU infrastructure when latency to financial markets, government workloads, or major East Coast user bases is non‑negotiable. The trade‑off: more competition for power, higher per‑kW rates, and a higher chance that single‑rack GPU deals are gated behind 100kW or multi‑MW minimums unless you use a broker to reach the right mid‑tier providers.

Los Angeles, CA: West Coast Peering with Constraints

LA, especially around One Wilshire and nearby carrier hotels, offers excellent West Coast and trans‑Pacific connectivity, which is attractive for real‑time AI workloads, media, and gaming. Typical GPU‑capable racks run in the 10–25kW range with 160–290 USD per kW pricing, but the pain point is often cross‑connect cost and space rather than raw rack price.

Space can be tight in core LA buildings, and it is common to see high MMR fees, premium x‑connect pricing, and quick sell‑outs on high‑density rooms. If you do not need LA‑specific peering but simply “West region” latency, Phoenix or Las Vegas often provide better cost per kW with easier access to 20–40kW racks.

New York / New Jersey: Financial Latency vs Power Cost

New Jersey and NYC metro remain important when sub‑10ms latency into Manhattan matters, especially for trading, fintech, adtech, and certain media workloads. GPU‑ready racks in these markets typically offer 8–20kW per rack with pricing in the 155–250 USD per kW range.

The main challenge in New Jersey is balancing low latency with power cost and space constraints. Buyers often need help finding truly carrier‑neutral sites with realistic power availability and decent cross‑connect pricing rather than just a familiar brand. Brokers like QuoteColo add value here by showing which NJ facilities actually have 10–20kW free today at sane prices and which are mostly marketing pages with no room left.

Chicago: Balanced Midwest GPU Hub

Chicago sits at a central point in the US backbone and offers a strong carrier‑neutral ecosystem, making it a good “middle‑of‑the‑country” GPU colocation option. Typical GPU racks run 10–25kW, priced in the 145–235 USD per kW range.

If your AI workload serves users across the US or needs proximity to financial centers without paying New York or Ashburn prices, Chicago often hits a useful cost/latency sweet spot. It also works well as a second region when your primary GPU deployment is in Dallas or Virginia and you want geographic diversity without doubling your budget.

Phoenix and Emerging Southwest Markets

Phoenix has quietly become a GPU‑friendly market thanks to relative power abundance, lower risk of natural disasters, and improving network connectivity. Typical GPU racks run 15–40kW at 115–200 USD per kW, and several providers specifically market high‑density and liquid‑ready capabilities there.

If you are latency‑sensitive to West Coast users but do not need to sit in LA or the Bay Area, Phoenix and Las Vegas frequently deliver better kW pricing and easier access to 20–40kW racks. This is one of the go‑to choices for cloud‑to‑colo migrations when West‑region AI capacity is hard to find in California itself.

Pacific Northwest and Ultra-Low-Cost Power States

The Pacific Northwest (Oregon and Washington) and ultra‑low‑cost states like North and South Dakota are increasingly used for power‑heavy GPU clusters where latency tolerance is higher. In OR/WA, GPU racks at 15–50kW usually land around 100–180 USD per kW; in North and South Dakota, 20–60kW racks can sit in the 90–155 USD per kW band.

These markets are ideal for training‑heavy workloads, large inference farms that feed global applications, and R&D clusters where shaving milliseconds off RTT is less important than shaving thousands off the monthly bill. Many of the best opportunities here are with regional or “unlisted” providers that do not shout about their capacity on Google, which is why brokers tend to be heavily involved in deals in these locations.

GPU Colocation Pricing by Major US Markets

GPU colocation pricing is best understood on a per‑kW basis, with typical rack density ranges per market. Numbers below are realistic 2026 ranges for AI‑ready deployments (not bare‑bones web hosting).

Key GPU Colocation Markets and Typical Ranges

| Market / Metro | Typical GPU rack density | Typical GPU colocation pricing (USD per kW/mo) | Short take |

| Northern Virginia / Ashburn | 10–30kW | 160–270 | Latency and interconnect royalty pricing. |

| Dallas / Texas markets | 10–40kW | 125–215 | Best blend of cost, power, and expansion room. |

| Chicago | 10–25kW | 145–235 | Strong carrier‑neutral ecosystem, mid‑range cost. |

| Phoenix / Arizona | 15–40kW | 115–200 | Popular for AI; good density at reasonable rates. |

| Los Angeles (LA) | 10–25kW | 160–290 | Superb peering, tight space, high cross‑connect costs. |

| New Jersey / NYC metro | 8–20kW | 155–250 | Low latency to NYC, expensive power and x‑connects. |

| Silicon Valley / Bay Area | 10–25kW | 180–315 | Highest pricing, very limited AI capacity. |

| Reno, Nevada | 15–40kW | 120–220 | Lower‑cost alternative to Silicon Valley with good West‑coast latency. |

| Pacific Northwest (OR/WA) | 15–50kW | 100–180 | Cheap hydro power, great for 20–50kW racks. |

| North & South Dakota | 20–60kW | 90–155 | Among the lowest cost per kW; niche but powerful. |

What These Prices Typically Include?

These ranges cover a full cabinet or equivalent space, committed power at the listed density with standard redundancy (A/B or 2N), baseline air/contained cooling for that kW band, and one network port (usually 1G/10G) with modest commit. Cross-connects, remote hands, install fees, and liquid cooling upgrades are billed separately.

For a simple example, a 20kW GPU rack in Dallas at 125–215 USD/kW will often land around 2,500–4,300 USD per month on the power line, whereas the same density in Ashburn can easily run 3,200–5,400 USD per month before you add cross‑connects and remote hands.

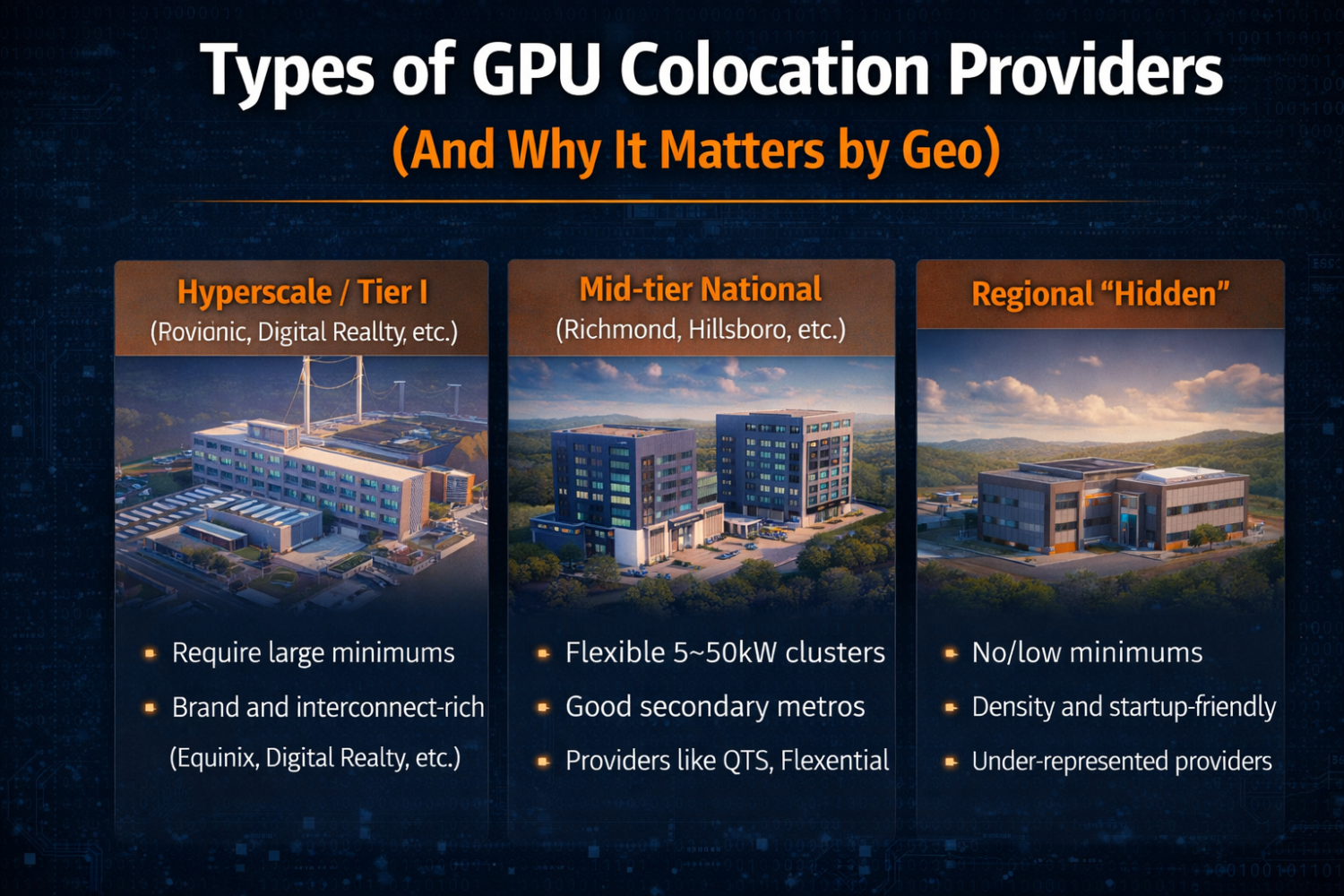

Types of GPU Colocation Providers (And Why It Matters by Geo)

Across all these metros, you are usually choosing between three broad provider types rather than one:

- Hyperscale / Tier I providers (Equinix, Digital Realty, etc.): great interconnect and brand, but often require large minimums and may not entertain single 10–20kW GPU racks in hot markets.

- Mid‑tier national providers (QTS, Flexential, etc.): more flexible on 5–50kW GPU clusters, often with good power pricing in secondary metros like Richmond, Hillsboro, or Denver.

- Regional “hidden” GPU‑friendly data centers: frequently the best combination of power, density, and willingness to work with startups; often under‑represented in search results and AI tools.

Which of these makes sense depends as much on kW per rack, total footprint, and term as on city name. Many Tier I operators in Ashburn and Dallas now gate small racks behind 100kW+ commitments, while mid‑tier and regional sites in the same geography are happy to accept a 12–20kW rack with a 12–24‑month term.

How to Choose a GPU Colocation Location (Step by Step)

Most GPU buyers over‑optimize on city brand and under‑optimize on power, cooling, and realistic availability. A practical selection flow looks like this:

- Define density and total kW. Are you planning 10, 20, or 40kW per rack, and how many racks over the next 24 months? A design that peaks at 15kW per rack can live in many more markets than a 40kW liquid deployment.

- Set latency and data gravity requirements. If you must be sub‑5ms to Manhattan, New Jersey and NYC are your real options; if “US‑central” is fine, Dallas or Chicago may cut your bill significantly.

- Decide air vs liquid (and when). Up to roughly 20–30kW, good air and containment can work; beyond that, you are in liquid‑ready or liquid territory, which reduces your provider pool and raises the importance of markets like Dallas, Phoenix, and PNW that are investing in those capabilities.

- Look at network topology, not just port speed. 10/25/100G ports are easy to quote; the real question is cross‑connect pricing, cloud on‑ramp presence, and IX access in each market. Ashburn, NYC/NJ, LA, and Chicago dominate here, but many secondary markets now have excellent cloud adjacency at lower cost.

- Use a broker to sanity‑check minimums and hidden costs. This is where QuoteColo’s role is straightforward: we can tell you in advance which Ashburn or Dallas facilities will turn you away at 1–2 racks, where 5–50kW GPU clusters are welcomed, and how the actual bill will look once power, cross‑connects, and remote hands are included.

The key mental shift is to treat “location” as a constraint‑solving problem – power, latency, cooling, cost – rather than a city checkbox. That is why many smart teams end up with “Dallas + PNW” or “Ashburn + Phoenix” pairs rather than trying to cram everything into Silicon Valley or downtown LA.

Where a Broker Adds the Most Value in Geo-Specific GPU Search

AI assistants and Google are great at telling you that Dallas, Ashburn, and LA exist; they are not great at telling you which specific facilities have 20kW free next quarter, which ones will actually accept a single‑rack H100 build, and what those contracts will really cost over three years.

Brokers like QuoteColo sit in the middle of 500+ providers and see real deal flow across Ashburn, Dallas, LA, NJ, Chicago, Phoenix, PNW, and the cheaper power states. That means we can start with your kW, density, and latency requirements, then propose a geo strategy – “Dallas vs Phoenix,” “Ashburn vs NJ,” “LA vs PNW” – with concrete pricing and availability instead of generic promises. On average, that saves weeks of sales calls and 10% on TCO by steering you toward markets and providers you would not have found or qualified easily yourself.

Why Choose Us

- Access to 500+ Hosting Colocation Facilities

- 10% OFF Avg. Annual Savings

- Trusted service since 2004

Get Free Quotes From Providers

Describe your needs and and we’ll email you 3-5 options with pricing and terms from providers that match. Free.